AI Transformation is a Problem of Governance in 2026

Let’s admit it: AI is already transforming almost every industry, and the before and after are plain to see. The uncomfortable truth is that many organizations are scaling AI faster, and more blindly, than they can control it. That is why AI transformation is a problem of governance, not capability. Most organizations can use AI well, but when it comes to controlling it, few have a firm grip.

This happens because people put far more effort into learning and leveraging AI than into AI governance responsibilities and accountability, ethics, frameworks, and regulations. It is high time to spread AI governance awareness, because governance is what lets us control the outcomes of AI both today and in the future. Without a working AI governance framework, risk, compliance, and business accountability remain fragmented across teams.

Did you know that, as per the national university, around 77% of industries today are either leveraging AI or exploring its use in their business operations?

Still, the real failure happening now is not technical. It is organizational. Companies are deploying intelligence at scale without building the structures required to govern it at scale.

Let’s discuss why AI transformation is a problem of governance in 2026 in detail.

Why AI Governance Becomes the Weakest Link in AI Transformation

First, let’s understand what AI governance is. AI governance defines how organizations turn powerful AI systems into controlled, accountable, and trusted operations. It also assigns clear AI governance responsibilities across leadership, legal, risk, and product teams, so decisions do not disappear inside technical silos. In practice, governance converts ethical intent into enforceable policies, operating standards, and approval workflows.

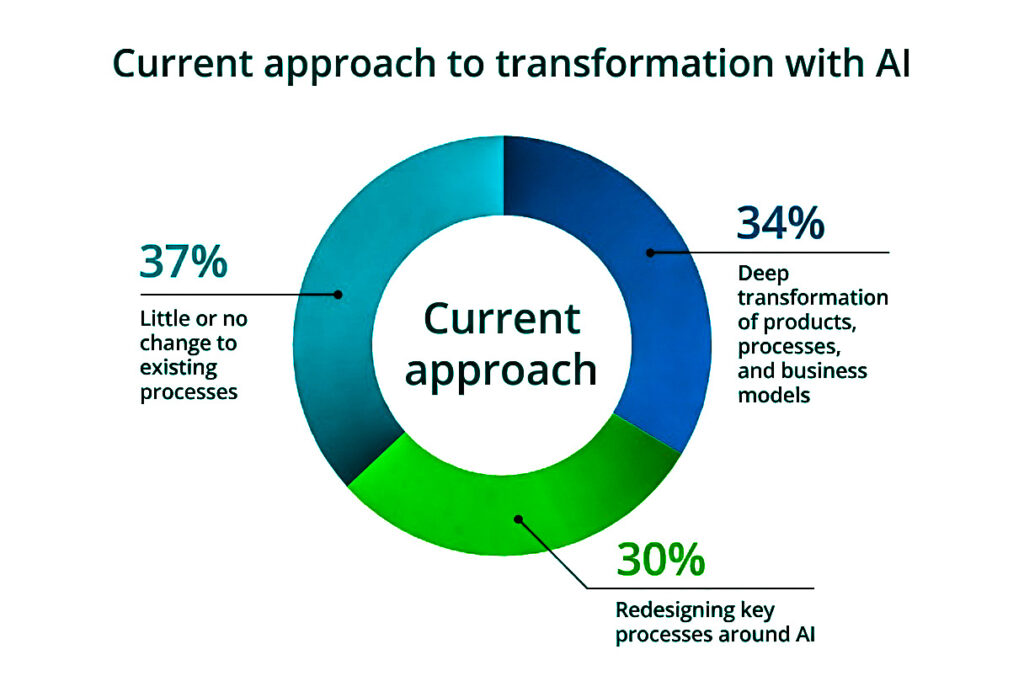

A glimpse of today’s approach to AI transformation, from the Deloitte 2026 report, State of AI in the Enterprise: The untapped edge

Here are the five key factors that show why governance becomes the biggest problem in AI transformation:

1. Ownership Vacuum: AI systems cut across IT, legal, compliance, business, and operations, but no single function truly owns the outcomes. When responsibility is fragmented, clear AI governance responsibilities for risk, deployment, and escalation simply fall between teams.

2. Decision Authority Mismatch: The people who approve AI use are often not the ones accountable for its real-world impact. This disconnect creates delays, policy conflicts, and silent risk exposure when AI begins influencing customers, employees, or financial outcomes.

3. Post-Deployment Blindness: Most organizations focus heavily on approval and rollout, but lack continuous governance after systems go live. Without structured AI governance monitoring and ongoing AI governance assessment, harmful behavior, bias, and performance drift remain invisible until damage is already done.

4. Context Collapse at Scale: AI behaves differently across regions, business units, and regulatory environments. Governance fails when organizations cannot maintain contextual accuracy and consistent controls while the same models are reused globally.

5. Governance Treated as Compliance, not Infrastructure: Many enterprises still design governance as a checklist for regulators instead of an operational system embedded into product, data, and platform workflows. As a result, governance cannot keep pace with rapid experimentation and enterprise-wide scaling.
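Factor 3 above, post-deployment blindness, is easier to picture with a small monitoring check. The following is a minimal sketch in Python, not a recommended monitoring design: the function names `drift_score` and `needs_review`, the idea of comparing mean model scores between two time windows, and the `0.1` threshold are all assumptions made for illustration. Real monitoring would track many more signals, such as bias metrics and error rates.

```python
from statistics import mean


def drift_score(baseline: list[float], recent: list[float]) -> float:
    """Absolute shift in the mean model score between two windows.

    `baseline` holds scores captured at approval time, `recent` holds
    scores from the live system. Both names are hypothetical.
    """
    if not baseline or not recent:
        raise ValueError("both windows need at least one score")
    return abs(mean(recent) - mean(baseline))


def needs_review(baseline: list[float], recent: list[float],
                 threshold: float = 0.1) -> bool:
    """Flag the model for governance review when the shift exceeds
    an assumed, organization-defined threshold."""
    return drift_score(baseline, recent) > threshold


if __name__ == "__main__":
    approved_window = [0.50, 0.52, 0.48, 0.50]
    live_window = [0.70, 0.72, 0.68, 0.70]
    if needs_review(approved_window, live_window):
        print("Escalate: model behavior has shifted since approval")
```

The point of the sketch is organizational rather than statistical: the check runs after go-live, and a flagged result routes to a named owner instead of staying invisible.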

Four Critical Pillars that Strengthen Governance in AI Transformation

These four pillars work together, from leadership ownership down to day-to-day team habits:

- Executive accountability and decision ownership

AI governance only works when leadership formally owns outcomes, not just strategy. A clear executive mandate anchors the entire AI governance framework and ensures business, legal, and risk decisions are resolved at the top, not pushed down to technical teams.

- Governance embedded into delivery workflows

Policies alone cannot control fast-moving AI programs. Governance must be built directly into product design, data pipelines, and model release processes using practical AI governance tools that enforce approvals, traceability, and usage controls by default.

- Operational transparency and audit readiness

Organizations must be able to explain how AI decisions are made, how data is used, and how models change over time. Continuous documentation, logging, and review processes create defensible operations and real institutional trust.

- Organizational capability and accountability culture

Sustainable governance depends on people, not frameworks alone. Teams across business, technology, legal, and compliance must be trained to recognize risk, escalate issues early, and treat AI oversight as part of everyday operational responsibility.
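The second and third pillars above, governance embedded into delivery workflows and audit readiness, can be sketched as a release gate that blocks a model version until the required sign-offs exist and records every decision. This is a hedged illustration only: `ModelRelease`, `ReleaseGate`, and the three roles in `REQUIRED_APPROVALS` are hypothetical names, not part of any real governance tool, and each organization would define its own sign-off rules.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical set of sign-offs; a real organization defines its own.
REQUIRED_APPROVALS = frozenset({"business_owner", "legal", "risk"})


@dataclass
class ModelRelease:
    model_name: str
    version: str
    approvals: set[str] = field(default_factory=set)


@dataclass
class ReleaseGate:
    # Every check is appended here, so decisions stay traceable.
    audit_log: list[dict] = field(default_factory=list)

    def missing_approvals(self, release: ModelRelease) -> set[str]:
        """Return the required roles that have not signed off yet."""
        return set(REQUIRED_APPROVALS - release.approvals)

    def check(self, release: ModelRelease) -> bool:
        """Allow the release only when no approval is missing,
        and log the decision either way."""
        missing = self.missing_approvals(release)
        allowed = not missing
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model": release.model_name,
            "version": release.version,
            "allowed": allowed,
            "missing_approvals": sorted(missing),
        })
        return allowed


if __name__ == "__main__":
    gate = ReleaseGate()
    release = ModelRelease("credit_scoring", "1.2.0", approvals={"legal"})
    if not gate.check(release):
        print("Blocked, missing:", gate.audit_log[-1]["missing_approvals"])
```

Because the gate logs blocked attempts as well as approved ones, the audit trail shows who was asked, what was missing, and when, which is the kind of record an audit or regulator review would look for.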

Five Global Regulatory Forces Reshaping AI Governance in 2026

Here are five global regulatory forces shaping AI governance in 2026, starting with the EU baseline and then moving to truly international frameworks:

1. EU AI Act (global operating benchmark): The European Union has effectively set the global compliance baseline for risk classification, human oversight, documentation, and accountability. Even non-EU companies now design governance processes to meet EU standards because their AI systems touch European users, data, or markets.

2. OECD AI Principles (global policy alignment layer): The principles of the Organisation for Economic Co-operation and Development are now widely embedded into national and enterprise AI policies, pushing organizations to formalize transparency, robustness, and responsible deployment practices as part of enterprise governance programs.

3. UNESCO Global AI Ethics Framework: The UNESCO framework drives global expectations around human rights, social impact, and institutional responsibility, reinforcing that AI ethics and governance must be operationalized through policy, training, and decision oversight structures.

4. Council of Europe AI Convention (cross-border legal governance): The Council of Europe convention introduces a binding international governance layer focused on accountability, risk prevention, and safeguards for automated decision systems used across borders.

5. G7 Hiroshima AI Process (global coordination on governance standards): The G7 Hiroshima AI Process has accelerated international coordination on safety, transparency, and responsibility expectations, pushing enterprises toward common governance practices and shared reporting structures for high-impact AI systems.

Wrap Up

AI transformation ultimately fails or succeeds at the governance layer. Technology can scale instantly, but accountability cannot. Without clear AI governance responsibilities, embedded controls, and a practical AI governance framework, organizations cannot sustain trust, compliance, or business reliability. As global regulations now demand real oversight, governance is no longer optional support. It is the operating foundation of enterprise AI. Companies that build governance as infrastructure will scale safely. The rest will scale risk.

In the next phase of AI adoption, leadership will be defined not by how fast organizations deploy AI, but by how responsibly and consistently they govern it at scale.
